The Growth Club

How to Validate Demand For Your Next Offer Before You Spend Months Building It

After her launch produced nine sales, Priya Sharma made herself one promise.

She would never spend four months building something again without knowing first that people would actually pay for it.

Not that they'd engage with the free content around it. Not that they'd tell her in a survey they were interested. Not that they'd reply to an email saying they needed exactly this.

That they would open their wallets and pay.

Because here's what Priya had learned the hard way: the distance between enthusiastic engagement and an actual purchase is enormous. Her launch had generated plenty of the former and almost none of the latter. Her audience had liked her content, shared her posts, replied to her emails, and then failed to buy in anything like the numbers needed to make economic sense.

Enthusiasm is not demand. Demand is what happens when someone hands you money.

Before she built anything new, she needed to know the demand was real. And she needed to know it cheaply, quickly, and without committing months of her life to finding out.

The Four-Month Problem

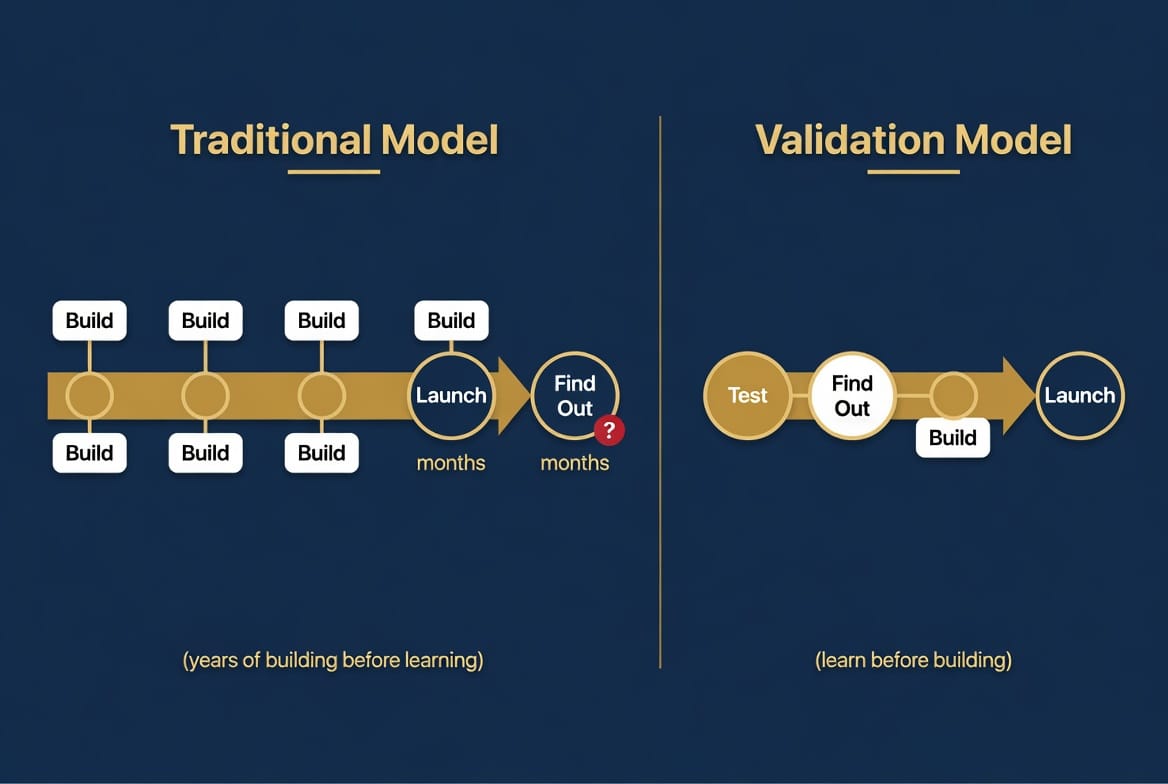

The traditional course creation model has a specific and serious structural flaw.

It asks you to make your largest investment, months of time and creative energy, before you have any confirmation that the market will pay for what you're building.

You identify a topic you're qualified to teach. You plan the curriculum. You record the modules. You build the assets. You set up the tech. You write the sales page. Then you launch and find out whether anyone wants it.

If it sells, great. If it doesn't, you've spent four months and potentially thousands of dollars in tools, equipment, and ad spend finding out that the demand either wasn't there or wasn't strong enough at your price point.

Priya had lived that experience. Eleven weeks of sustained effort, nine sales, and a course she now had to support and update regardless of whether she ever sold another copy.

The problem wasn't that she had built the wrong thing. The problem was the sequence. She had built first and validated second. By the time she found out what the market actually thought, she had already made the full investment.

Flipping that sequence, validating first and building second, changes the risk profile of the entire business.

What Real Validation Looks Like

There's only one form of validation that actually tells you whether demand exists.

A purchase.

Not a survey response. Not a thumbs up on a poll. Not a comment saying "I would definitely buy this." Not an email reply saying "I've been waiting for something like this."

An actual transaction. A real person making a real decision to spend real money on a specific solution to a specific problem.

Everything else is data about interest. Interest and buying behavior are not the same thing, and the gap between them is where most course creators get burned.

Priya's audience had shown enormous interest in her topic. The free content performed well. The engagement was genuine. None of that had translated into purchases at the moment of the ask. Interest without a prior buying decision doesn't predict purchase conversion the way most people assume it does.

The only way to know whether someone will pay $197 for a course is to find out first whether they will pay a smaller amount for something related to it.

That's the core logic behind the low-ticket validation test. A product priced between $7 and $47 that solves one specific problem in your space. Specific enough that the right person sees it and immediately thinks "that's exactly what I need right now." Priced low enough that the buying decision requires seconds rather than days of deliberation.

The conversion rate on that product, when it's shown to cold traffic, tells you whether real demand exists before you invest months building the full version.

Why Cold Traffic Is the Only Meaningful Test

Here's a mistake Priya almost made when she first heard about the validation test concept.

She considered running her test to her existing email list.

That would have told her almost nothing useful.

Her existing list had a specific and well-documented behavior pattern: they consumed free content and didn't buy things. Running a paid offer to them would have produced results contaminated by that existing relationship. Whatever they did or didn't do would reflect the history of that relationship as much as the actual demand for the offer.

Cold traffic, people who have never heard of her and are encountering her for the first time through a paid ad, is the only audience that gives you a clean signal.

When a stranger with no prior relationship to you pays $27 for something you built, that's demand. They have no goodwill toward you to draw on. They have no relationship history influencing their decision. They saw an offer that addressed a problem they were already trying to solve and decided it was worth paying for.

That's the signal you need before spending four months building a course.

Priya ran her validation test to cold traffic on Meta for five days with a budget of $200. Forty dollars a day. She was testing one specific offer: a $27 guide addressing a narrow, specific problem her ideal clients faced in the early stages of building their business.

The page converted at 2.8 percent on cold traffic. She made 14 sales and recovered $378 from a $200 test. Her front-end revenue covered her ad spend before she had written a single course module.

More importantly, she had confirmed something she had never been able to confirm before her first launch. Real strangers, with no prior relationship to her, were willing to open their wallets for this specific type of solution at this specific price point.

That's a completely different foundation to build on.
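If you want to sanity-check the numbers from a test like Priya's, the arithmetic is simple enough to sketch in a few lines of Python. This is only an illustration. The function and field names are made up for this example and aren't part of any tool. The visitor count is not a figure Priya reported. It is implied by dividing her sales by the rounded 2.8 percent conversion rate, so treat it as approximate.

```python
def summarize_validation_test(ad_spend, price, sales, conversion_rate):
    """Summarize a low-ticket validation test (hypothetical helper)."""
    revenue = price * sales
    return {
        "revenue": revenue,
        "net_after_ads": revenue - ad_spend,
        "return_on_ad_spend": revenue / ad_spend,
        "cost_per_sale": ad_spend / sales,
        # Implied, not reported: sales divided by the (rounded) conversion rate.
        "implied_visitors": round(sales / conversion_rate),
    }


# Figures from Priya's test: $40 a day for five days, a $27 guide, 14 sales.
summary = summarize_validation_test(
    ad_spend=5 * 40, price=27, sales=14, conversion_rate=0.028
)
for name, value in summary.items():
    print(f"{name}: {value:,.2f}")
```

With Priya's figures, this works out to $378 in revenue against $200 in ad spend, which leaves $178 after ads, and it implies roughly 500 visitors to the page. Swap in your own spend, price, and sales to see whether your front end covers its ad costs.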

What the Validation Test Tells You Beyond Whether People Buy

The purchase data from a validation test tells you more than just whether demand exists.

It tells you who is buying and why.

When Priya looked at the people who had purchased her $27 guide, she did something she hadn't done before her first launch. She surveyed them immediately after purchase with three simple questions. What specific problem were you hoping this would help you solve? What almost stopped you from buying? What would you most want to learn about this topic if you could ask me anything?

The answers to those three questions were more valuable than anything her four months of course creation had produced.

The language her buyers used to describe their problem became the copy for her next product. The things that almost stopped them from buying told her exactly which objections to address in her sales page. The things they most wanted to learn became the curriculum outline for the full course she was now considering building.

She hadn't surveyed her existing audience and gotten polite responses shaped by their relationship with her. She had surveyed actual buyers who had self-selected by paying money. Their words were unfiltered by social niceties or the desire to be encouraging to someone they liked.

That kind of data is worth more than any amount of pre-launch research. It comes only from people who have already demonstrated they will pay for what you offer.

The Specific Problem the $27 Product Needs to Solve

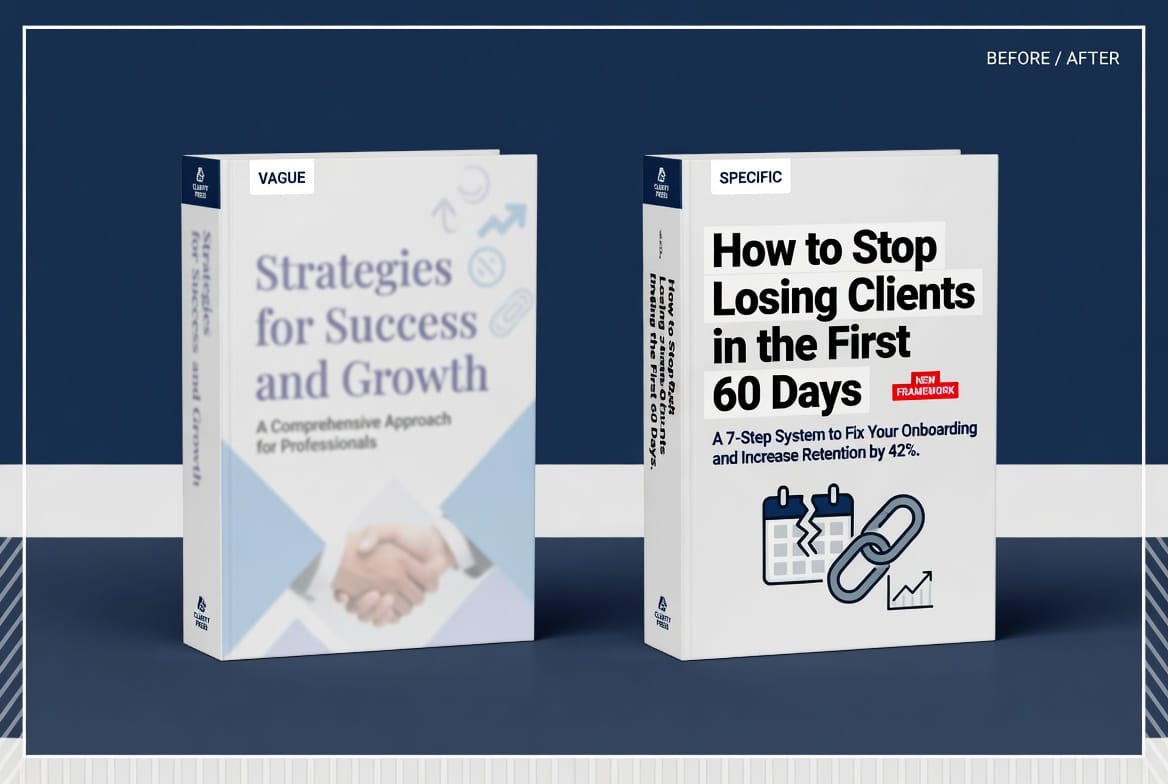

Not every low-ticket product makes an effective validation test. The specificity of the offer is what determines whether the test gives you meaningful data.

A product called "The Complete Guide to Building Your Coaching Business" is too broad to be a useful validation test. It doesn't tell you which specific problem your audience will pay to solve. It attracts a diffuse audience with varied needs and gives you ambiguous data about what to build next.

A product called "The Exact Framework I Used to Land My First Three Paying Clients Without a Big Audience or a Sales Call" is specific enough. It names a specific person, a specific problem, and a specific desired outcome. When that converts well on cold traffic, it tells you something precise about what your audience will pay for.

Priya's validated $27 guide addressed one specific early-stage business challenge in highly specific language. It wasn't her biggest idea. It wasn't the most comprehensive thing she could have built. It was the most specific solution to the most acute problem her ideal client was experiencing right now.

That specificity is what produced a 2.8 percent cold traffic conversion rate. A broader, more comprehensive offer would likely have converted at a lower rate and told her less.

What Happens to the Course You Already Built

If you've already been through a disappointing launch and you have a course sitting there that didn't sell the way you hoped, the validation model doesn't require you to throw it away.

It requires you to reposition it.

The course you built isn't the problem. It's a back-end asset waiting for the right front end to feed it. A $27 product that validates demand in your space and builds a list of buyers is the front end. Your existing course is what those buyers naturally move toward once they've consumed the front-end product and want to go further.

Priya's first course, the one that made nine sales after four months of build time, became the upsell behind her validated $27 guide eight months later. The same content. The same modules. The same workbooks. Just positioned differently: not as the cold ask to a barely-warm audience, but as the natural next step for someone who had already bought, already gotten value, and already decided they wanted more.

In its new position, it converted 22 percent of front-end buyers. The same course that had converted at a fraction of a percent with a barely-warm audience during the launch was now converting at 22 percent with buyers who had already made one purchasing decision about her.

The course was never the problem. The audience it was being presented to was the problem. Change the audience and the same content performs completely differently.

How to use your existing course as the back end of a front-end funnel, and how to structure the whole system so it builds your buyer list, covers its own costs, and sets up your next launch to perform nothing like your last one, is exactly what Part 3 covers.